1. Introduction

One of the core benefits of Java is the automated memory management with the help of the built-in Garbage Collector (or GC for short). The GC implicitly takes care of allocating and freeing up memory and thus is capable of handling the majority of the memory leak issues.

While the GC effectively handles a good portion of memory, it doesn’t guarantee a foolproof solution to memory leaking. The GC is pretty smart, but not flawless. Memory leaks can still sneak up even in applications of a conscientious developer.

There still might be situations where the application generates a substantial number of superfluous objects, thus depleting crucial memory resources, sometimes resulting in the whole application’s failure.

Memory leaks are a genuine problem in Java. In this tutorial, we’ll see what the potential causes of memory leaks are, how to recognize them at runtime, and how to deal with them in our application.

2. What Is a Memory Leak

A memory leak is a situation in which objects present in the heap are no longer used, but the garbage collector is unable to remove them from memory, so they are maintained unnecessarily.

A memory leak is bad because it blocks memory resources and degrades system performance over time. And if not dealt with, the application will eventually exhaust its resources, finally terminating with a fatal java.lang.OutOfMemoryError.

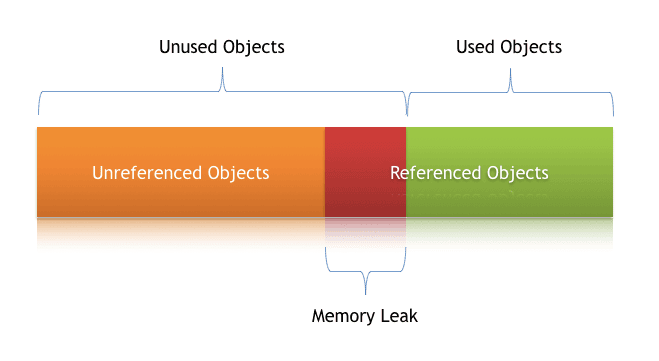

There are two different types of objects that reside in heap memory: referenced and unreferenced. Referenced objects are those that still have active references within the application, whereas unreferenced objects don’t have any active references.

The garbage collector removes unreferenced objects periodically, but it never collects the objects that are still being referenced. This is where memory leaks can occur:

Symptoms of a Memory Leak

- Severe performance degradation when the application is continuously running for a long time

- An OutOfMemoryError heap error in the application

- Spontaneous and strange application crashes

- The application is occasionally running out of connection objects

Let’s have a closer look at some of these scenarios and how to deal with them.

3. Types of Memory Leaks in Java

In any application, memory leaks can occur for numerous reasons. In this section, we’ll discuss the most common ones.

3.1. Memory Leak Through static Fields

The first scenario that can cause a potential memory leak is heavy use of static variables.

In Java, static fields have a lifetime that usually matches the entire lifetime of the running application (unless the ClassLoader that loaded the class becomes eligible for garbage collection).

Let’s create a simple Java program that populates a static List:

    public class StaticTest {
        public static List<Double> list = new ArrayList<>();

        public void populateList() {
            for (int i = 0; i < 10000000; i++) {
                list.add(Math.random());
            }
            Log.info("Debug Point 2");
        }

        public static void main(String[] args) {
            Log.info("Debug Point 1");
            new StaticTest().populateList();
            Log.info("Debug Point 3");
        }
    }

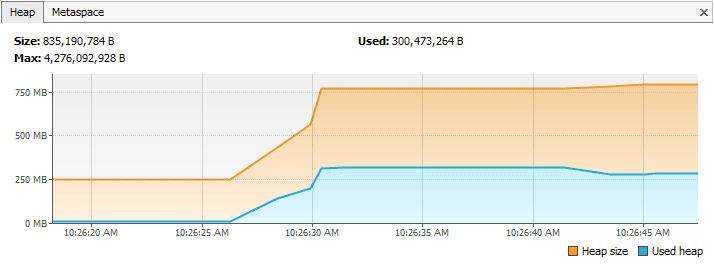

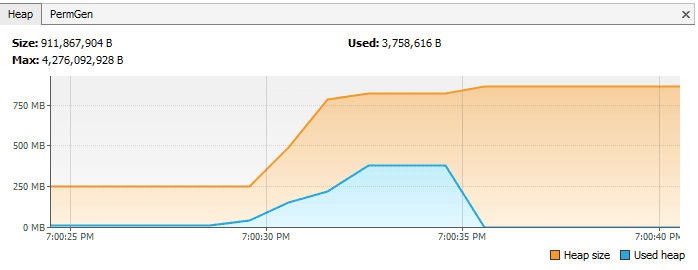

Now if we analyze the Heap memory during this program execution, then we’ll see that between debug points 1 and 2, as expected, the heap memory increased.

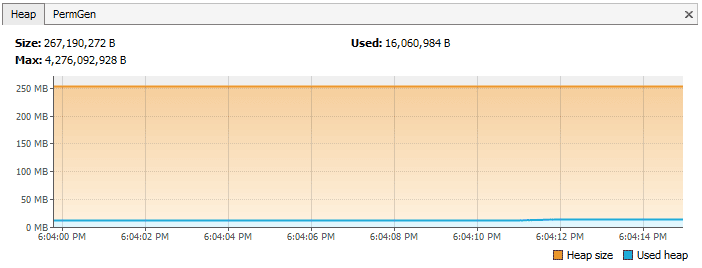

But when we leave the populateList() method at debug point 3, the heap memory still hasn’t been garbage collected, as we can see in this VisualVM response:

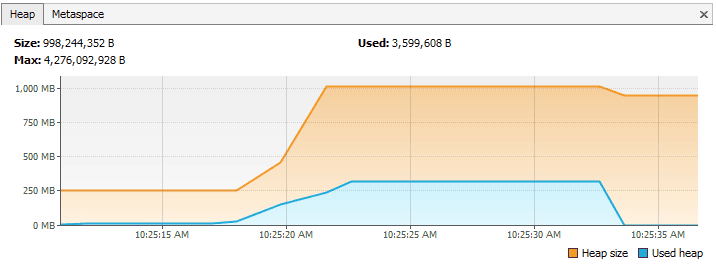

However, if we just drop the static keyword from the list declaration (line 2 of the above program), memory usage changes drastically, as this VisualVM response shows:

The first part until the debug point is almost the same as what we obtained in the case of static. But this time after we leave the populateList() method, all the memory of the list is garbage collected because we don’t have any reference to it.

Hence we need to pay very close attention to our usage of static variables. If collections or large objects are declared as static, then they remain in the memory throughout the lifetime of the application, thus blocking the vital memory that could otherwise be used elsewhere.

How to Prevent It?

- Minimize the use of static variables

- When using singletons, rely upon an implementation that lazily loads the object instead of eagerly loading
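As a minimal sketch of the lazy-loading advice, here is the initialization-on-demand holder idiom. The ReportCache class and its contents are hypothetical and exist only for illustration; the point is that the list isn’t allocated until getInstance() is first called:

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical singleton used only for illustration.
final class ReportCache {

    // Counts constructions so the lazy behaviour can be observed.
    static int instancesCreated = 0;

    private final List<Double> values = new ArrayList<>();

    private ReportCache() {
        instancesCreated++;
        for (int i = 0; i < 1_000; i++) {
            values.add(Math.random());
        }
    }

    // The nested Holder class is initialized only on the first call to
    // getInstance(), so nothing is allocated when ReportCache itself is loaded.
    private static final class Holder {
        static final ReportCache INSTANCE = new ReportCache();
    }

    static ReportCache getInstance() {
        return Holder.INSTANCE;
    }

    int size() {
        return values.size();
    }
}
```

Note that once the instance has been created, it still lives as long as its class loader does, so lazy loading only defers the cost; it doesn’t remove the need to keep static state small.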

3.2. Through Unclosed Resources

Whenever we make a new connection or open a stream, the JVM allocates memory for these resources. A few examples include database connections, input streams, and session objects.

Forgetting to close these resources can block the memory, keeping it out of the reach of the GC. This can even happen when an exception prevents execution from reaching the statement that closes these resources.

In either case, the connections left open consume memory, and if we don’t deal with them, they can degrade performance and may even result in an OutOfMemoryError.

How to Prevent It?

- Always use a finally block to close resources

- The code that closes the resources (even in the finally block) should not itself throw any exceptions

- When using Java 7+, we can make use of a try-with-resources block
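The following sketch illustrates the try-with-resources advice. TrackedResource is a hypothetical stand-in for a connection or stream; it only records whether close() was called, so we can see that the resource is released even when the body of the try block throws:

```java
// Hypothetical resource that records whether close() was called.
final class TrackedResource implements AutoCloseable {
    private boolean closed = false;

    void use(boolean fail) {
        if (fail) {
            throw new IllegalStateException("simulated failure while using the resource");
        }
    }

    @Override
    public void close() {
        closed = true;
    }

    boolean isClosed() {
        return closed;
    }
}

final class ResourceDemo {

    // try-with-resources calls close() when the block exits,
    // whether it completes normally or throws.
    static void process(TrackedResource resource, boolean fail) {
        try (TrackedResource r = resource) {
            r.use(fail);
        }
    }
}
```

The same pattern applies to any class that implements AutoCloseable, such as JDBC connections and the streams in java.io.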

3.3. Improper equals() and hashCode() Implementations

When defining new classes, a very common oversight is not properly overriding the equals() and hashCode() methods.

HashSet and HashMap use these methods in many operations, and if they’re not overridden correctly, then they can become a source for potential memory leak problems.

Let’s take an example of a trivial Person class and use it as a key in a HashMap:

    public class Person {
        public String name;

        public Person(String name) {
            this.name = name;
        }
    }

Now we’ll insert duplicate Person objects into a Map that uses this key.

Remember that a Map cannot contain duplicate keys:

    @Test
    public void givenMap_whenEqualsAndHashCodeNotOverridden_thenMemoryLeak() {
        Map<Person, Integer> map = new HashMap<>();
        for (int i = 0; i < 100; i++) {
            map.put(new Person("jon"), 1);
        }
        Assert.assertFalse(map.size() == 1);
    }

Here we’re using Person as a key. Since Map doesn’t allow duplicate keys, the numerous duplicate Person objects that we’ve inserted as a key shouldn’t increase the memory.

But since we haven’t defined proper equals() and hashCode() methods, each new Person is treated as a distinct key, so the duplicate objects pile up and increase memory usage. That’s why we see more than one object in memory. The heap memory in VisualVM for this looks like:

However, if we had overridden the equals() and hashCode() methods properly, then there would only exist one Person object in this Map.

Let’s take a look at proper implementations of equals() and hashCode() for our Person class:

    public class Person {
        public String name;

        public Person(String name) {
            this.name = name;
        }

        @Override
        public boolean equals(Object o) {
            if (o == this) return true;
            if (!(o instanceof Person)) {
                return false;
            }
            Person person = (Person) o;
            return person.name.equals(name);
        }

        @Override
        public int hashCode() {
            int result = 17;
            result = 31 * result + name.hashCode();
            return result;
        }
    }

And in this case, the following assertion would pass:

    @Test
    public void givenMap_whenEqualsAndHashCodeOverridden_thenNoMemoryLeak() {
        Map<Person, Integer> map = new HashMap<>();
        for (int i = 0; i < 2; i++) {
            map.put(new Person("jon"), 1);
        }
        Assert.assertTrue(map.size() == 1);
    }

After properly overriding equals() and hashCode(), the Heap Memory for the same program looks like:

Another example is an ORM tool like Hibernate, which uses the equals() and hashCode() methods to analyze objects and save them in its cache.

The chances of a memory leak are quite high if these methods are not overridden, because Hibernate then wouldn’t be able to compare objects and would fill its cache with duplicates.

How to Prevent It?

- As a rule of thumb, when defining new entities, always override equals() and hashCode() methods

- It’s not enough just to override them; these methods must also be overridden in an optimal way

For more information, visit our tutorials Generate equals() and hashCode() with Eclipse and Guide to hashCode() in Java.

3.4. Inner Classes That Reference Outer Classes

This happens in the case of non-static inner classes, including anonymous classes. For initialization, these inner classes always require an instance of the enclosing class.

Every non-static inner class has, by default, an implicit reference to its containing class. If we use an object of this inner class in our application, then even after the containing class’ object goes out of scope, the containing object will not be garbage collected.

Consider a class that holds the reference to lots of bulky objects and has a non-static inner class. Now when we create an object of just the inner class, the memory model looks like:

However, if we just declare the inner class as static, then the same memory model looks like this:

This happens because the inner class object implicitly holds a reference to the outer class object, thereby making it an invalid candidate for garbage collection. The same happens in the case of anonymous classes.

How to Prevent It?

- If the inner class doesn’t need access to the containing class members, consider turning it into a static class
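To make the difference concrete, here is a small sketch. Outer, Inner, and Nested are hypothetical names chosen for illustration. The non-static Inner can reach its enclosing instance through Outer.this, which is exactly the reference that keeps the enclosing object alive, while the static Nested has no such link:

```java
// Hypothetical classes for illustration only.
final class Outer {

    // Stands in for the bulky objects held by the enclosing instance.
    private final byte[] bulkyData = new byte[1024 * 1024];

    // Non-static inner class: each instance is tied to the Outer instance
    // that created it and can reach it through Outer.this.
    class Inner {
        Outer enclosing() {
            return Outer.this;
        }
    }

    // Static nested class: created without any Outer instance,
    // so it cannot keep one alive.
    static class Nested {
    }

    Inner newInner() {
        return new Inner();
    }
}
```

As long as any code holds on to an Inner object, the Outer instance and its bulkyData array remain reachable. A Nested object can be created with new Outer.Nested() and retains nothing from Outer.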

3.5. Through finalize() Methods

Use of finalizers is yet another source of potential memory leak issues. Whenever a class’ finalize() method is overridden, then objects of that class aren’t instantly garbage collected. Instead, the GC queues them for finalization, which occurs at a later point in time.

Additionally, if the code written in finalize() method is not optimal and if the finalizer queue cannot keep up with the Java garbage collector, then sooner or later, our application is destined to meet an OutOfMemoryError.

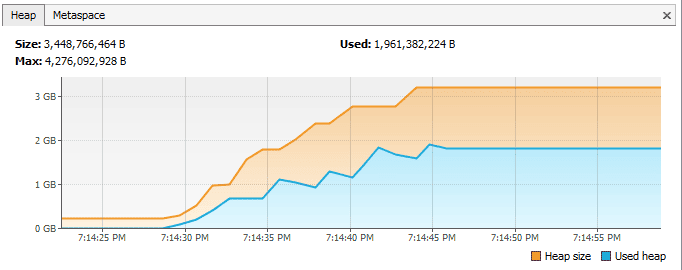

To demonstrate this, let’s consider that we have a class for which we have overridden the finalize() method and that the method takes a little bit of time to execute. When a large number of objects of this class get garbage collected, it looks like this in VisualVM:

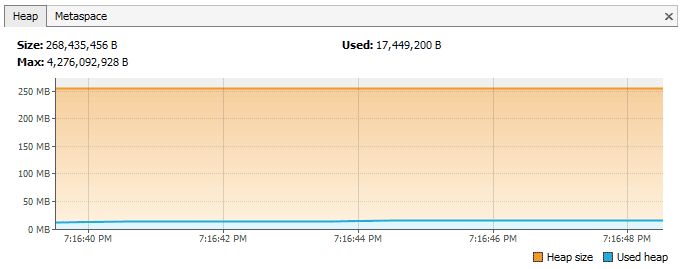

However, if we just remove the overridden finalize() method, then the same program gives the following response:

How to Prevent It?

- We should always avoid finalizers

For more detail about finalize(), read section 3 (Avoiding Finalizers) in our Guide to the finalize Method in Java.
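One possible alternative to a finalizer, assuming Java 9 or later, is to make the class AutoCloseable and register its cleanup action with java.lang.ref.Cleaner as a safety net. The NativeBuffer class below is hypothetical, and the "release" is just a flag standing in for real cleanup work:

```java
import java.lang.ref.Cleaner;

// Hypothetical resource holder used only for illustration.
final class NativeBuffer implements AutoCloseable {

    private static final Cleaner CLEANER = Cleaner.create();

    // The cleanup state must not reference the NativeBuffer itself,
    // otherwise the buffer could never become unreachable.
    private static final class State implements Runnable {
        volatile boolean released = false;

        @Override
        public void run() {
            released = true; // real code would free the underlying resource here
        }
    }

    private final State state = new State();
    private final Cleaner.Cleanable cleanable = CLEANER.register(this, state);

    // Explicit close runs the cleanup action immediately, and at most once.
    @Override
    public void close() {
        cleanable.clean();
    }

    boolean isReleased() {
        return state.released;
    }
}
```

With this design, callers are expected to use try-with-resources. The Cleaner only runs the action later if an instance becomes unreachable without having been closed.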

3.6. Interned Strings

The Java String pool went through a major change in Java 7, when it was moved from PermGen to heap space. But for applications running on version 6 and below, we should be more attentive when working with large Strings.

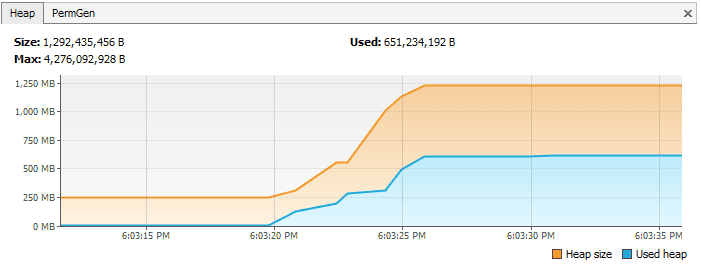

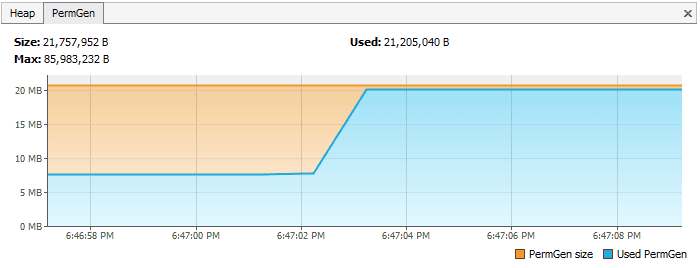

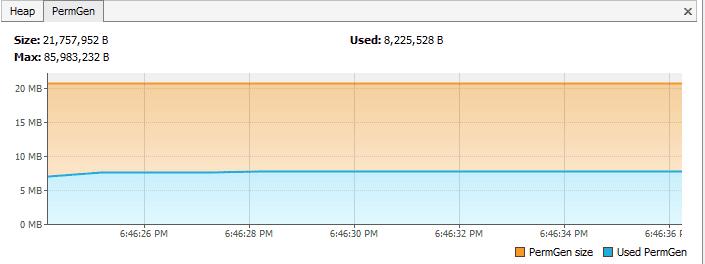

If we read a huge String object and call intern() on it, then it goes to the string pool, which is located in PermGen (permanent memory), and will stay there as long as our application runs. This blocks the memory and creates a major memory leak in our application.

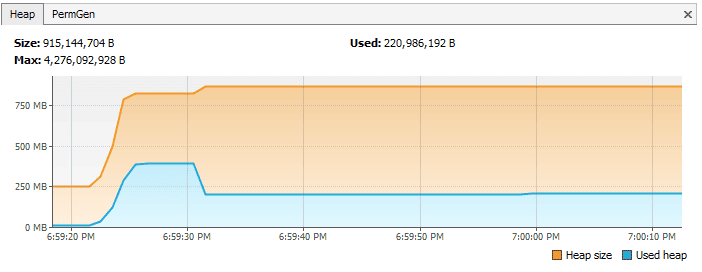

The PermGen for this case in JVM 1.6 looks like this in VisualVM:

In contrast to this, in a method, if we just read a string from a file and do not intern it, then the PermGen looks like:

How to Prevent It?

- The simplest way to resolve this issue is to upgrade to the latest Java version, as the String pool has been located in heap space from Java 7 onwards

- If working with large Strings on an older JVM, increase the size of the PermGen space to avoid any potential OutOfMemoryErrors:

-XX:MaxPermSize=512m

3.7. Using ThreadLocals

ThreadLocal (discussed in detail in the Introduction to ThreadLocal in Java tutorial) is a construct that gives us the ability to isolate state to a particular thread and thus allows us to achieve thread safety.

When using this construct, each thread maintains its own copy of a ThreadLocal variable instead of sharing the resource across multiple threads, and holds an implicit reference to that copy for as long as the thread is alive.

Despite its advantages, the use of ThreadLocal variables is controversial, as they are infamous for introducing memory leaks if not used properly. Joshua Bloch once commented on thread local usage:

“Sloppy use of thread pools in combination with sloppy use of thread locals can cause unintended object retention, as has been noted in many places. But placing the blame on thread locals is unwarranted.”

Memory leaks with ThreadLocals

ThreadLocals are supposed to be garbage collected once the holding thread is no longer alive. But the problem arises when ThreadLocals are used along with modern application servers.

Modern application servers use a pool of threads to process requests instead of creating new ones (for example the Executor in case of Apache Tomcat). Moreover, they also use a separate classloader.

Since thread pools in application servers work on the concept of thread reuse, the pooled threads are never garbage collected. Instead, they’re reused to serve another request.

Now, if any class creates a ThreadLocal variable but doesn’t explicitly remove it, then a copy of that object will remain with the worker Thread even after the web application is stopped, thus preventing the object from being garbage collected.

How to Prevent It?

- It’s good practice to clean up ThreadLocals when they’re no longer used. ThreadLocal provides the remove() method, which removes the current thread’s value for this variable

- Do not use ThreadLocal.set(null) to clear the value. It doesn’t actually remove the entry; instead, it looks up the Map associated with the current thread and sets the value stored for this ThreadLocal to null, leaving the entry itself in place

- It’s even better to treat a ThreadLocal as a resource that needs to be closed in a finally block, to make sure that it’s always cleaned up, even in the case of an exception:

    try {
        threadLocal.set(System.nanoTime());
        //... further processing
    } finally {
        threadLocal.remove();
    }
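To see why the cleanup matters with pooled threads, consider the following sketch. It runs two tasks on a single-thread pool, which is a simplified stand-in for an application server’s worker pool. The names ThreadLocalPoolDemo and REQUEST_USER are hypothetical. Without remove(), the second task finds the value left behind by the first one:

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

final class ThreadLocalPoolDemo {

    // Hypothetical per-thread state; the name is illustrative.
    static final ThreadLocal<String> REQUEST_USER = new ThreadLocal<>();

    // Runs two "requests" on one pooled thread and returns what the second
    // request finds in the ThreadLocal before setting anything itself.
    static String valueSeenBySecondTask(boolean cleanUp) {
        ExecutorService pool = Executors.newFixedThreadPool(1);

        Runnable firstRequest = () -> {
            try {
                REQUEST_USER.set("alice");
                // ... further processing
            } finally {
                if (cleanUp) {
                    REQUEST_USER.remove();
                }
            }
        };
        Callable<String> secondRequest = REQUEST_USER::get;

        try {
            pool.submit(firstRequest).get();
            // The same worker thread is reused for the next task.
            return pool.submit(secondRequest).get();
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException(e);
        } finally {
            pool.shutdown();
        }
    }
}
```

Calling valueSeenBySecondTask(false) returns the stale "alice" value, whereas valueSeenBySecondTask(true) returns null because the first task removed its value before the thread was reused.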

4. Other Strategies for Dealing with Memory Leaks

Although there is no one-size-fits-all solution when dealing with memory leaks, there are some ways by which we can minimize these leaks.

4.1. Enable Profiling

Java profilers are tools that monitor and diagnose memory leaks in an application. They analyze what’s going on internally in our application, for example, how memory is allocated.

Using profilers, we can compare different approaches and find areas where we can optimally use our resources.
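A profiler gives the most complete picture, but for a rough first look we can also read heap usage from inside the application. The sketch below uses the standard MemoryMXBean from java.lang.management. The HeapUsageLogger class is a hypothetical helper, and the numbers it prints are only approximate because a GC cycle may run between the two readings:

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryUsage;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper for illustration only.
final class HeapUsageLogger {

    // Bytes of heap currently in use, as reported by the JVM's MemoryMXBean.
    static long usedHeapBytes() {
        MemoryUsage heap = ManagementFactory.getMemoryMXBean().getHeapMemoryUsage();
        return heap.getUsed();
    }

    public static void main(String[] args) {
        long before = usedHeapBytes();

        List<Double> values = new ArrayList<>();
        for (int i = 0; i < 1_000_000; i++) {
            values.add(Math.random());
        }

        long after = usedHeapBytes();
        System.out.printf("heap used before: %d bytes, after: %d bytes (%d entries)%n",
                before, after, values.size());
    }
}
```

Logging such readings at a few fixed points, in the spirit of the debug points used in section 3.1, can hint at where memory grows before we attach a full profiler.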

We have used Java VisualVM throughout section 3 of this tutorial. Please check out our Guide to Java Profilers to learn about different types of profilers, like Mission Control, JProfiler, YourKit, Java VisualVM, and the Netbeans Profiler.

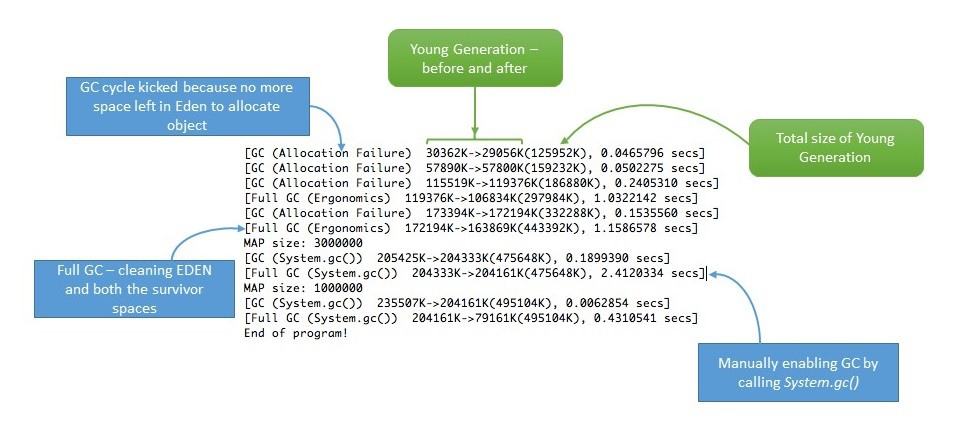

4.2. Verbose Garbage Collection

By enabling verbose garbage collection, we get a detailed trace of the GC’s activity. To enable this, we need to add the following to our JVM configuration:

-verbose:gc

By adding this parameter, we can see the details of what’s happening inside GC:

4.3. Use Reference Objects to Avoid Memory Leaks

We can also resort to the reference objects that come built in with the java.lang.ref package to deal with memory leaks. Using the java.lang.ref package, instead of directly referencing objects, we use special references to objects that allow them to be easily garbage collected.

Reference queues are designed to make us aware of actions performed by the garbage collector. For more information, read our Soft References in Java tutorial, specifically section 4.
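As a minimal sketch of these ideas, the following example wraps an object in a WeakReference registered with a ReferenceQueue. The class name WeakReferenceDemo is hypothetical. Note that System.gc() is only a hint, so whether the referent is actually cleared during a given run isn’t guaranteed:

```java
import java.lang.ref.ReferenceQueue;
import java.lang.ref.WeakReference;

final class WeakReferenceDemo {

    // True while the referent can still be obtained through the weak reference.
    static boolean isStillReachable(WeakReference<?> ref) {
        return ref.get() != null;
    }

    public static void main(String[] args) {
        ReferenceQueue<byte[]> queue = new ReferenceQueue<>();
        byte[] payload = new byte[1024];

        // The weak reference on its own does not keep payload alive.
        WeakReference<byte[]> ref = new WeakReference<>(payload, queue);
        System.out.println("reachable while strongly held: " + isStillReachable(ref));

        payload = null; // drop the only strong reference
        System.gc();    // a hint only; collection is not guaranteed

        // If the collector cleared the referent, ref.get() returns null and the
        // reference object is eventually enqueued in the reference queue.
        System.out.println("reachable after GC hint: " + isStillReachable(ref));
        System.out.println("reference polled from queue: " + queue.poll());
    }
}
```

A cache built on such references lets the collector reclaim entries under memory pressure instead of holding them for the lifetime of the application.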

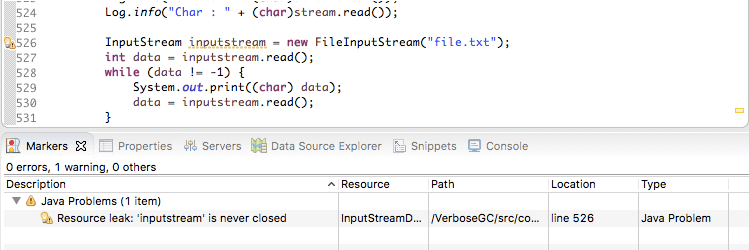

4.4. Eclipse Memory Leak Warnings

For projects on JDK 1.5 and above, Eclipse shows warnings and errors whenever it encounters obvious cases of memory leaks. So when developing in Eclipse, we can regularly visit the “Problems” tab and be more vigilant about memory leak warnings (if any):

4.5. Benchmarking

We can measure and analyze the Java code’s performance by executing benchmarks. This way, we can compare the performance of alternative approaches to do the same task. This can help us choose a better approach and may help us to conserve memory.

For more information about benchmarking, please head over to our Microbenchmarking with Java tutorial.

4.6. Code Reviews

Finally, we always have the classic, old-school way of doing a simple code walk-through.

In some cases, even this trivial-looking method can help in eliminating some common memory leak problems.

5. Conclusion

In layman’s terms, we can think of a memory leak as a disease that degrades our application’s performance by blocking vital memory resources. And like all other diseases, if not cured, it can result in fatal application crashes over time.

Memory leaks are tricky to solve and finding them requires intricate mastery and command over the Java language. While dealing with memory leaks, there is no one-size-fits-all solution, as leaks can occur through a wide range of diverse events.

However, if we resort to best practices and regularly perform rigorous code walk-throughs and profiling, then we can minimize the risk of memory leaks in our application.

As always, the code snippets used to generate the VisualVM responses depicted in this tutorial are available on GitHub.